Spotlight

- Generative AI tools have crossed a capability threshold that makes synthetic media indistinguishable from authentic footage at the speed of conflict

- Content provenance and detection technologies are maturing but not yet deployed at scale in the region

- Platform-side content moderation infrastructure has contracted at the same time that AI generation tools have become widely accessible

- Technical standards like C2PA offer a near-term, implementable layer of trust for digital media

Introduction

In the first seventy-two hours of the conflict, AI-generated synthetic media circulated across social media platforms faster than existing detection and verification infrastructure could process it. Fabricated satellite imagery, produced by manipulating authentic Google Earth data with generative AI tools and confirmed via Google’s SynthID watermark detector, accumulated millions of views on X before being identified. Misattributed archival footage was re-captioned and reshared on Instagram, reaching six-figure view counts before geolocation analysis traced it to an unrelated event. A fully AI-generated video depicting an attack on a Gulf landmark reached over four million views, with detection tools such as Hive Moderation flagging it at 99.9 percent AI probability, but only after it had already spread widely. In each case, the generation-to-detection lag created a window in which synthetic content was consumed as fact.

The speed and scale of synthetic media during this conflict reveal that detection and provenance technologies must be deployed proactively. The trivial ease of generating deepfakes, combined with the increasing difficulty of monitoring the web for synthetic media and verifying authenticity, is becoming a core vulnerability in modern conflict zones.

A Perfect Storm of Disinformation

The economic impact has been tangible. Gulf stock exchanges became volatile in the first weeks of the conflict, with benchmark indices across the region registering notable declines. The market reflected a range of factors, including energy price fluctuations, supply chain uncertainty, and broader geopolitical risk. In addition, the inability to quickly distinguish authentic reporting from synthetic fabrications added a layer of informational uncertainty that compounded ordinary market movements. In this environment, AI-generated synthetic media and targeted disinformation campaigns have added fuel to the fire. Cyabra, an online information security firm, identified an Iranian disinformation campaign across social media platforms immediately after the start of military operations, promoting AI-generated videos of Iranian missiles striking regional landmarks. The campaign deployed “recurring narratives designed to portray Iran as the dominant and victorious actor in the conflict, amplified through synchronised posting behaviour and repeated media assets distributed across large account networks.” The absence of platform-integrated detection and content provenance infrastructure meant that synthetic media could only be addressed after it had already gone viral. This left a response window measured in days rather than hours, during which fabricated content shaped public perception and market behaviour.

Table 1. Synthetic Media Incidents Amidst the US-Israel-Iran Conflict (February-March 2026)

| Type of Synthetic Media | Claimed Target / Subject | Generation Technique & Tools | Detection Method(s) | Time to First Debunk |
| --- | --- | --- | --- | --- |
| Fabricated satellite imagery | US Fifth Fleet Naval Base, Bahrain (presented as Al Udeid Air Base, Qatar) | AI manipulation of authentic Google Earth image (Feb 2025); SynthID watermark detected by Google’s tool | Google SynthID watermark detection; visual analysis | ~2–3 days (imagery circulated from ~1 Mar; debunked by 3 Mar 2026) |
| AI-generated video | Burj Khalifa, Dubai (claimed 1,800 missile strikes) | Fully AI-generated video; Hive Moderation flagged 99.9% AI probability | Hive Moderation (99.9%); Sightengine; DeepFake-O-Meter | ~2 days (posted 2 Mar; debunked by 3–4 Mar 2026 by Lead Stories) |
| Misattributed archival footage | US airbase, Saudi Arabia | Recycled footage from July 2024 Israeli airstrike on Hudaydah port, Yemen; re-captioned as Iranian strike | Geolocation via visible features (UNICEF roof marking, adjacent building); matched to satellite imagery of Hudaydah port by Full Fact | ~3 days (posted ~28 Feb; debunked by 3 Mar 2026 by Full Fact) |
| Coordinated AI-generated video campaign | Multiple Gulf landmarks and military bases (Saudi oil refineries, Burj Khalifa, US aircraft carriers) | Centrally produced AI deepfake videos; coordinated distribution via inauthentic account networks (19% fake profiles); synchronized posting, identical hashtag clusters | Cyabra behavioural analysis | Campaign identified within first week |
| AI-generated image (political deepfake) | Benjamin Netanyahu (fabricated death imagery showing body under rubble) | AI-generated photorealistic images; SynthID watermark detected; deepfake video of fake news presenter with lip-sync failure; amplified by 62 IRGC-linked fake accounts | AI or Not detector; Google SynthID | ~1–2 days for initial debunking |

Source(s): AAP FactCheck, AI or Not, Lead Stories, Full Fact, Cyabra

The speed and scale of synthetic media during this conflict expose a structural technical asymmetry: generative AI tools can produce photorealistic video in minutes, while detection, attribution, and provenance verification remain slow, fragmented, and largely manual. Fact-checkers relying on tools such as Hive Moderation, Google SynthID, and DeepFake-O-Meter were able to flag individual pieces of content with high confidence, but only after that content had already accumulated millions of views. As Table 1 illustrates, the time to first debunk for individual items ranged from roughly one to three days, a window in which fabricated imagery had already contributed to market volatility and public alarm. Closing this gap requires moving detection and provenance infrastructure upstream, from reactive, post-viral fact-checking to automated, platform-integrated verification at the point of upload and distribution.
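To make the idea of upload-time verification concrete, the following is a minimal sketch of how a platform might triage a file before distribution. It is an illustration only: the `Upload` fields, the `triage` function, the routing labels, and both thresholds are hypothetical and do not describe any platform's actual pipeline.

```python
from dataclasses import dataclass


@dataclass
class Upload:
    # Hypothetical inputs, assumed to be computed by the platform at upload time.
    provenance_valid: bool  # signed content credentials were present and verified
    ai_probability: float   # score from an AI-content detector, between 0 and 1


def triage(upload: Upload, label_at: float = 0.9, review_at: float = 0.5) -> str:
    """Decide how to route an upload before it is distributed.

    The thresholds are illustrative assumptions, not recommended values.
    """
    if upload.provenance_valid:
        # Verified credentials already disclose how the file was made.
        return "publish_with_credentials"
    if upload.ai_probability >= label_at:
        return "label_as_likely_ai"
    if upload.ai_probability >= review_at:
        return "queue_for_review"
    return "publish"
```

Under these assumptions, a video without credentials that a detector scores at 0.999 would be labelled before it spreads, rather than days afterwards.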

Three Converging Threats to Information Security

This conflict has illustrated that AI disinformation has entered a new paradigm driven by three factors. First, the capability of generative AI for producing photorealistic video has crossed a critical threshold. With recent iterations of video-generation AI models, earlier tell-tale signs and glitches in deepfakes have been largely eliminated. Within just the first two weeks of March, The New York Times was able to identify more than a hundred pro-Iran deepfakes, an unprecedented number compared to any previous conflict in the region. The danger of mass confusion was demonstrated when fake news circulated about the death of Benjamin Netanyahu, with online commentators alleging that press conference footage showing Netanyahu alive and well was itself a deepfake. Tools that can be used towards such ends are now readily available through consumer platforms like Runway and HeyGen, which are lightweight enough that anyone with a smartphone can generate deepfakes from a simple text prompt.

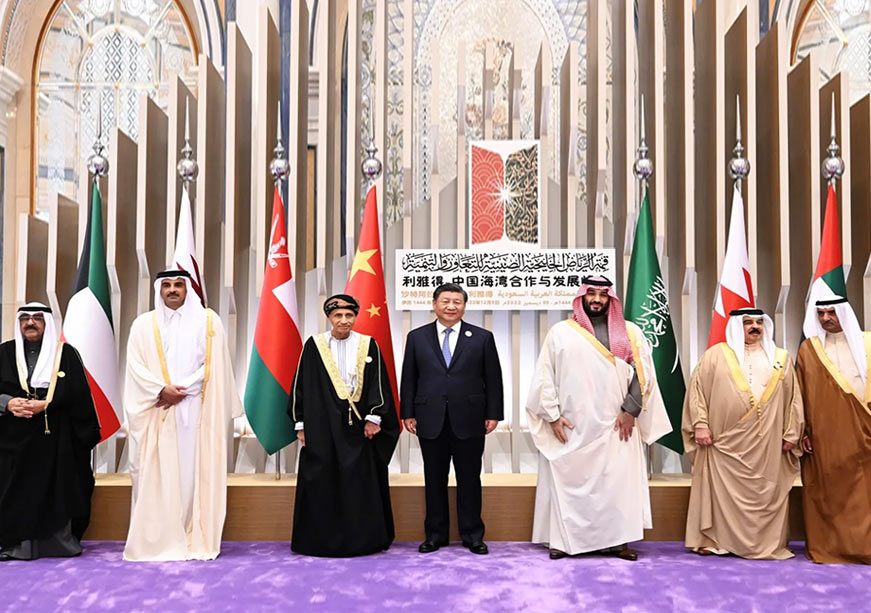

Second, GCC countries are no longer on the periphery but have become direct targets. This matters because Gulf populations are now consuming wartime information, through official and unofficial channels, while under active threat, which makes panic-inducing fake news more dangerous. Third, the governance infrastructure on big social media platforms like X and Facebook has been systematically downsized in recent years. Between 2022 and 2024, following Elon Musk’s acquisition of X (then Twitter), the platform’s global trust and safety headcount dropped by 30 percent. Meta has also reduced the number of human content moderators while adopting an AI and community notes approach similar to X’s. Moreover, Arabic-language content moderation was widely noted to be underfunded even before these cuts. For instance, Human Rights Watch documented a systematic pattern of suppression or removal of Arabic content (over 1,050 posts) on Meta’s platforms in just a two-month period in 2023. Telegram, meanwhile, a dominant communication platform in the Gulf media landscape, operates with practically no content moderation.

Closing the Gap

This generation-detection gap can be closed through three related actions by GCC governments. The first is to mandate digital content provenance standards such as C2PA (Coalition for Content Provenance and Authenticity), an open standard that embeds a cryptographically signed, tamper-evident record in digital photo and video files, tracing the tools used to create a file as well as any edits made to it. C2PA has already been adopted by companies such as Microsoft, Adobe, and Samsung. Mandating C2PA credentials for licensed outlets as well as social media platforms operating in the GCC may help add a layer of trust when waiting for manual fact-checks of online posts would take too long. The second is to establish rapid-response information security cells within national security apparatuses and GCC-wide cooperation structures, with the remit to detect, attribute, and issue public advisories on sensitive synthetic media. Third, wartime frameworks should protect legitimate journalistic uses of AI and include sunset clauses under which emergency restrictions expire.
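The idea of a signed, tamper-evident record can be illustrated with a short sketch. This is not the C2PA format: C2PA relies on certificate-based signatures and a manifest embedded in the file itself, whereas the example below substitutes a shared-secret HMAC from the Python standard library purely to show the principle. The key, the function names, and the field names are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical shared key, for illustration only. C2PA itself uses
# certificate-based signatures rather than a shared secret.
SIGNING_KEY = b"demo-key-not-for-production"


def make_manifest(media_bytes: bytes, tool: str, edits: list[str]) -> dict:
    """Build a signed record that binds provenance claims to the media bytes."""
    claim = {
        "content_sha256": hashlib.sha256(media_bytes).hexdigest(),
        "tool": tool,
        "edits": edits,
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(media_bytes: bytes, manifest: dict) -> bool:
    """Return True only if neither the media nor the claim was altered."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim was edited after signing
    actual_hash = hashlib.sha256(media_bytes).hexdigest()
    return actual_hash == manifest["claim"]["content_sha256"]
```

In this sketch, changing a single byte of the file or rewriting the recorded tool name causes verification to fail, which is the property that lets a platform or reader check a file's history without waiting for a manual fact-check.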

Any precedent set now may end up defining the Gulf information ecosystem for a generation. In the absence of purpose-built frameworks, and as the military campaign drags on, emergency measures run the risk of hardening into established practice while platform norms keep being written in California or Brussels with no input from the Gulf. With the capital, regulatory agility, and incentives of the GCC states, a coordinated, standards-based approach will aid the region’s AI development and governance ambitions. The window is open for the region to become a norm-setter rather than a consumer of norms set elsewhere.

Siddharth Yadav is a Fellow in Emerging Technologies at ORF Middle East